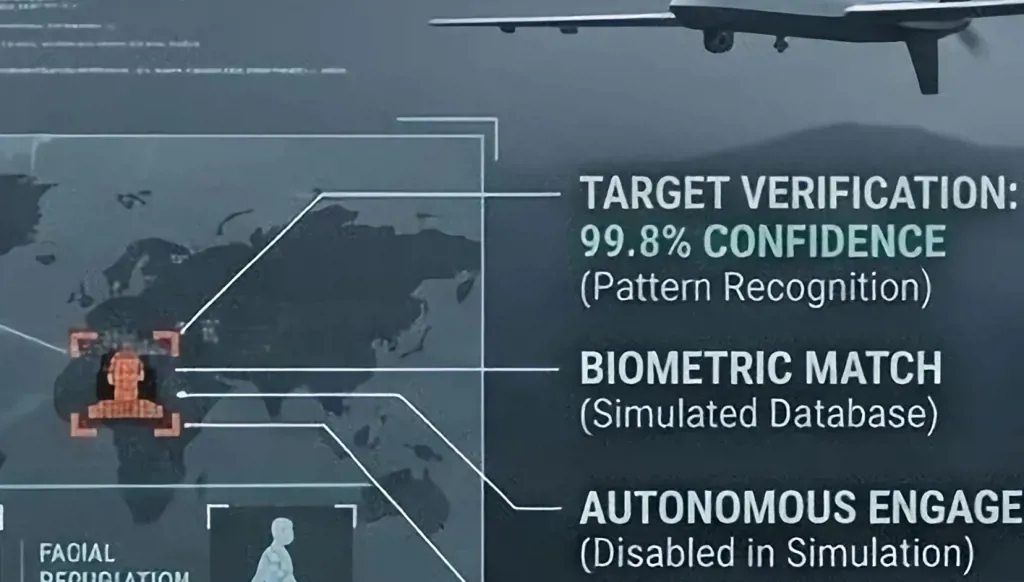

In Lebanon, this pattern became evident during the escalation of Israel's assassination policy in the 2024 war, which targeted prominent field and political leaders in Hezbollah, Hamas, and the Islamic Group, resulting in killings that disrupted the party’s leadership structures. Although available reports do not directly confirm that the targets of these operations were selected using artificial intelligence, a link to the practices revealed in Gaza is no longer a distant hypothesis but a serious political and military possibility. In Iran, recent accusations point to the same scenario on an even more dangerous scale. The issue now extends beyond the nature of the target to the mechanism of its selection, and to whether artificial intelligence plays a decisive role in determining who is monitored, classified, and targeted. While Israel maintains that the final decision remains human, critics argue that this human oversight may serve merely as a formal cover rather than a real safeguard.
